
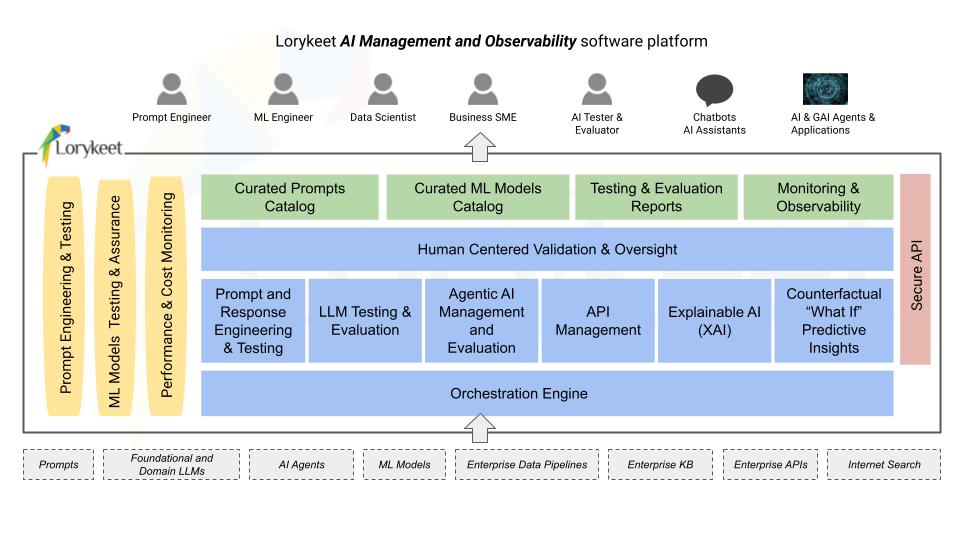

Lorykeet Platform

Unified AI Management. Smart Observability

Next-Level AI: The Foundations of Enterprise Management & Observability

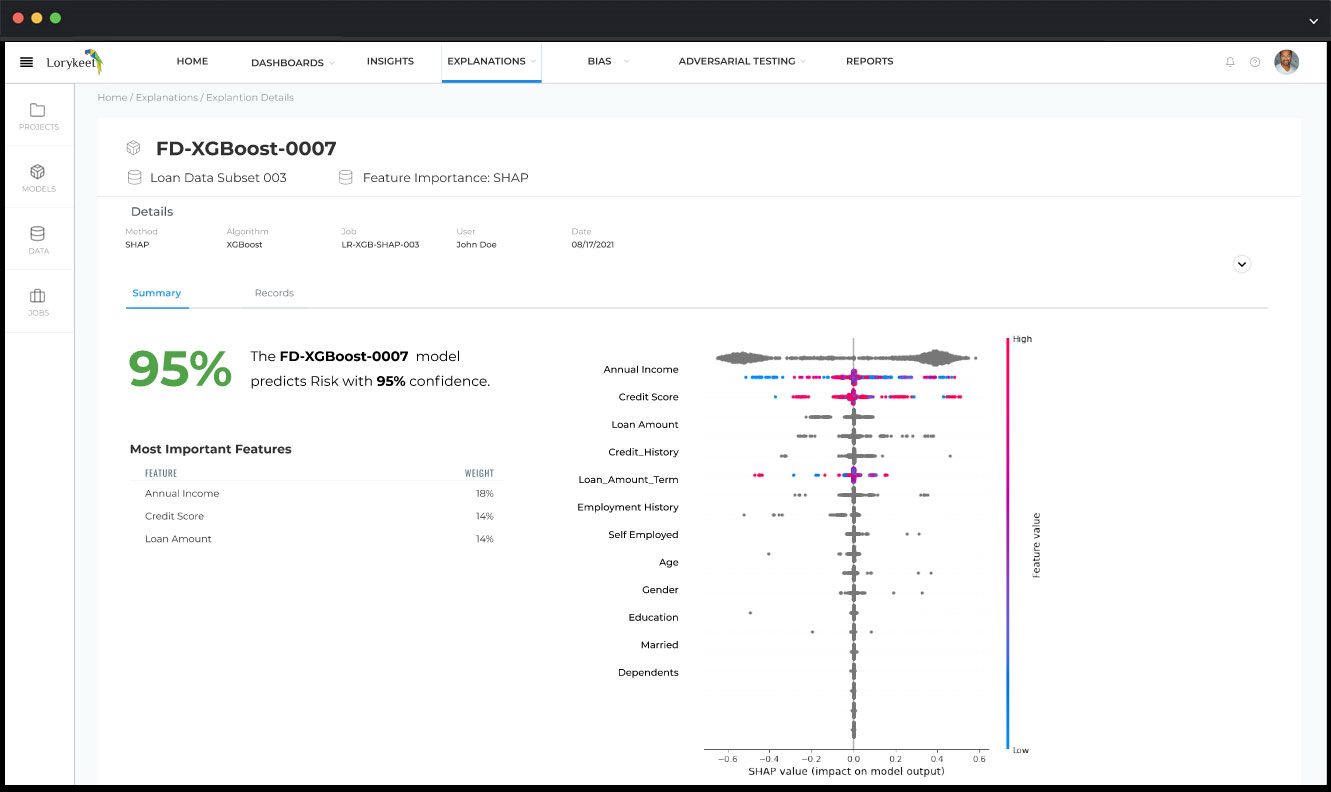

Make your AI Transparent and Trustworthy by Using Explainable AI (XAI)

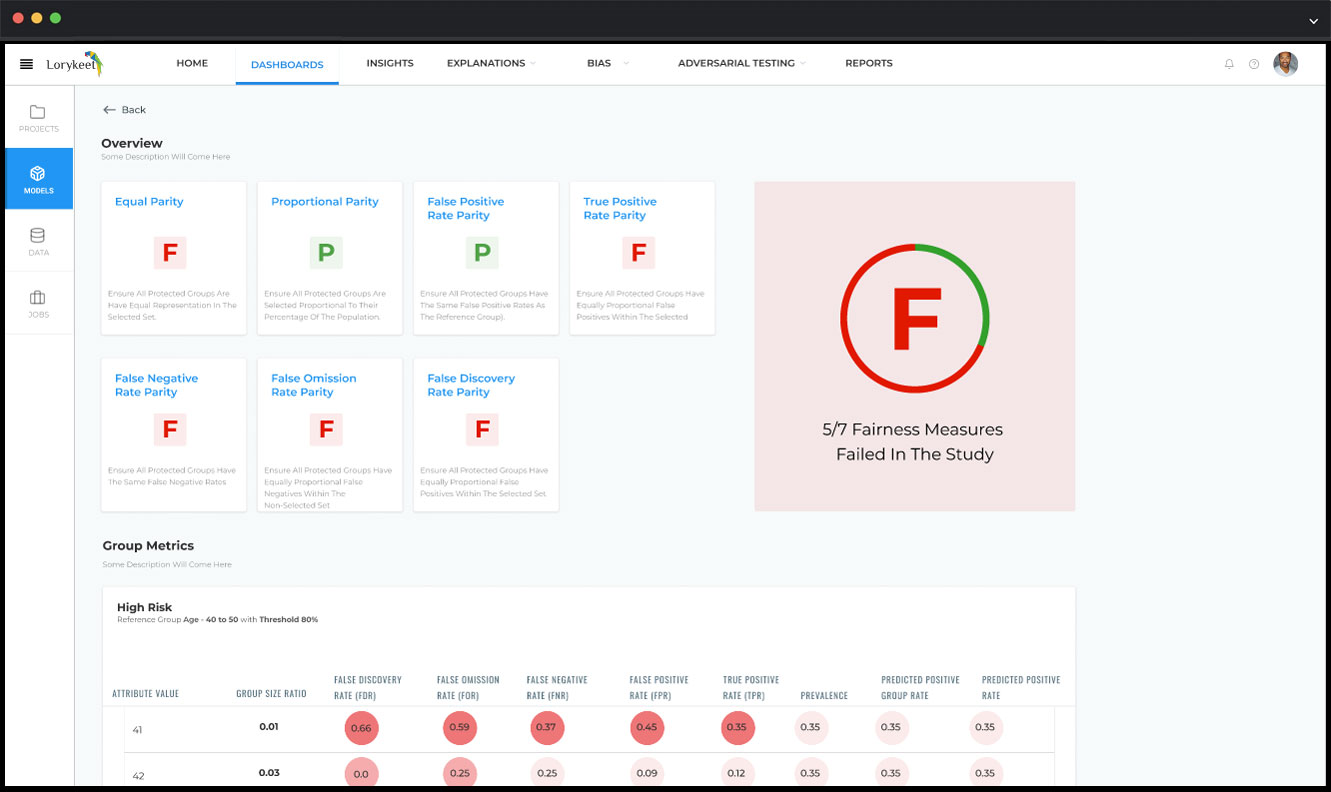

Reduce Bias Risk with Proactive Detection and Mitigation

Establish guardrails that detect and reduce algorithmic bias, which can cause societal harm and damage your brand's reputation. Use multiple bias-testing methods to validate results and confirm their accuracy.
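Different bias tests summarize unfairness in different ways, so using more than one method is worthwhile. The following is a minimal sketch, not the Lorykeet Platform's implementation, of two common group-fairness metrics computed from binary predictions:

* the demographic parity difference (the gap in selection rates between groups);
* the disparate impact ratio (the ratio of those selection rates).

The function names, the group labels `"a"` and `"b"`, and the toy data are hypothetical.

```python
from typing import Sequence


def selection_rate(preds: Sequence[int], groups: Sequence[str], group: str) -> float:
    """Fraction of positive (1) predictions among members of `group`."""
    members = [p for p, g in zip(preds, groups) if g == group]
    if not members:
        raise ValueError(f"no samples for group {group!r}")
    return sum(members) / len(members)


def demographic_parity_difference(preds, groups, privileged, unprivileged) -> float:
    """Selection-rate gap; 0.0 means both groups are selected at the same rate."""
    return selection_rate(preds, groups, unprivileged) - selection_rate(preds, groups, privileged)


def disparate_impact_ratio(preds, groups, privileged, unprivileged) -> float:
    """Selection-rate ratio; 1.0 means parity."""
    privileged_rate = selection_rate(preds, groups, privileged)
    if privileged_rate == 0:
        raise ZeroDivisionError("privileged group has no positive predictions")
    return selection_rate(preds, groups, unprivileged) / privileged_rate


# Hypothetical toy data: group "a" is selected 3/4 of the time, group "b" 1/4.
preds = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
dpd = demographic_parity_difference(preds, groups, privileged="a", unprivileged="b")
dir_ = disparate_impact_ratio(preds, groups, privileged="a", unprivileged="b")
print(f"parity difference = {dpd:.2f}, impact ratio = {dir_:.2f}")
# prints "parity difference = -0.50, impact ratio = 0.33"
```

A commonly used rule of thumb, the "four-fifths rule", flags impact ratios below 0.8, so both metrics flag this toy model. On real data the two metrics can disagree, which is one reason to check several.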

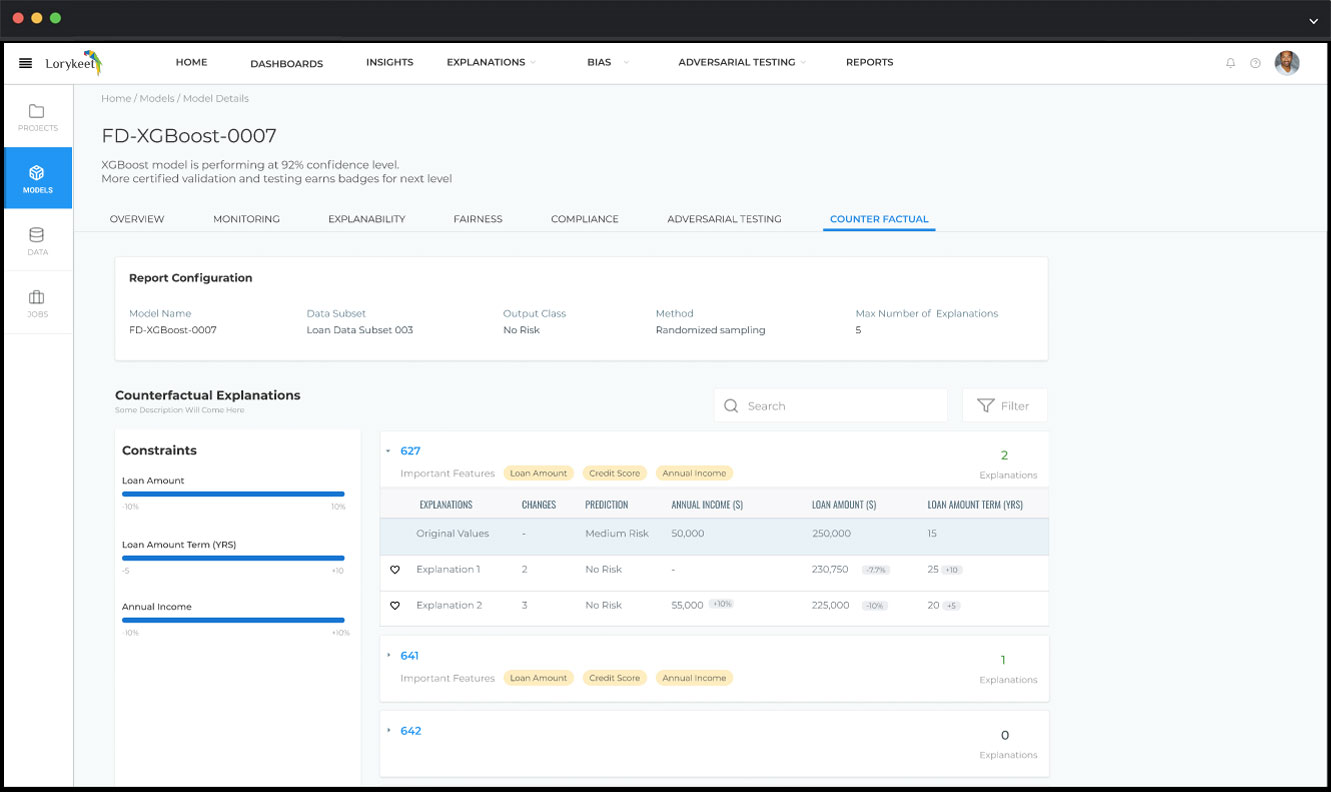

Perform “What If” Analysis for Actionable Insights

Understand which features would need to change, and by how much, to reach a desired outcome. With counterfactual explanations, you can explain a model's decision in a simple, clear statement: “If X had not occurred, Y would not have occurred.”
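To show what a counterfactual explanation looks like in practice, here is a minimal sketch. It assumes a toy linear classifier, and it is not the platform's method. For each feature, it computes the value that feature would need, with all other features held fixed, to flip the model's decision. The `LinearModel` class, the loan-approval weights, and the applicant data are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class LinearModel:
    """Toy linear classifier: predicts 1 when the weighted sum plus bias is >= 0."""
    weights: Dict[str, float]
    bias: float

    def score(self, x: Dict[str, float]) -> float:
        return sum(w * x[f] for f, w in self.weights.items()) + self.bias

    def predict(self, x: Dict[str, float]) -> int:
        return int(self.score(x) >= 0.0)


def single_feature_counterfactuals(model: LinearModel, x: Dict[str, float],
                                   margin: float = 1e-6) -> Dict[str, float]:
    """For each feature, the value it would need (others fixed) to flip the prediction."""
    current = model.score(x)
    target = 1 - model.predict(x)
    # Aim just past the decision boundary on the target side.
    goal = margin if target == 1 else -margin
    results = {}
    for feature, w in model.weights.items():
        if w == 0:
            continue  # changing this feature cannot move the score
        results[feature] = x[feature] + (goal - current) / w
    return results


# Hypothetical loan model; income and debt are in thousands.
model = LinearModel(weights={"income": 0.05, "debt": -0.2}, bias=-1.0)
applicant = {"income": 30.0, "debt": 10.0}
print(model.predict(applicant))  # 0 (denied): score = 1.5 - 2.0 - 1.0 = -1.5
for feature, value in single_feature_counterfactuals(model, applicant).items():
    print(f"If {feature} had been {value:.1f} instead of {applicant[feature]:.1f}, "
          "the loan would have been approved.")
```

The closed-form step works only because the model is linear. Practical counterfactual methods search more generally and also keep the suggested changes plausible (for example, debt cannot become negative).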

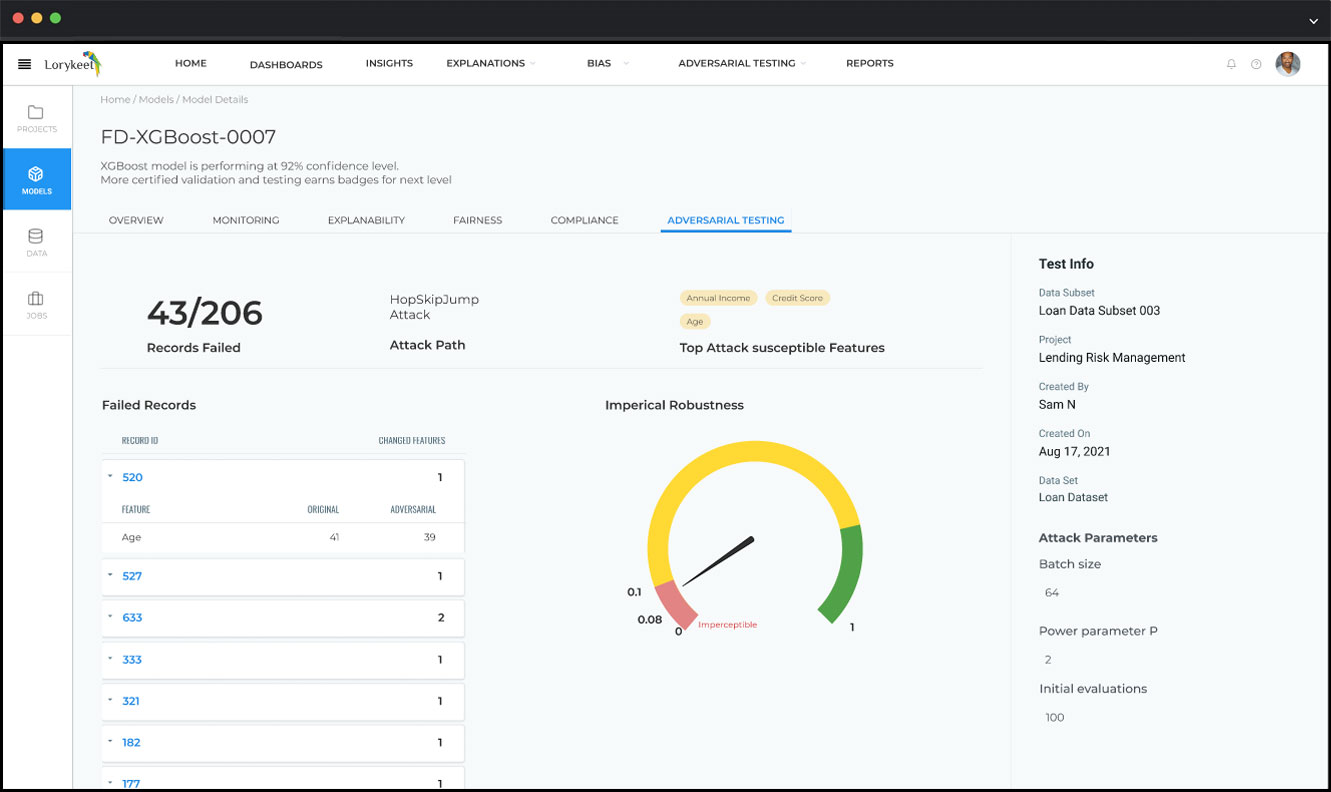

Test for Adversarial Attacks and Ensure your Models are Secure

Reduce vulnerability and defend your AI systems against adversarial manipulation and attacks. Many modern machine learning models can be fooled in surprising ways, for example by small, deliberately crafted changes to their inputs. Test them proactively for these vulnerabilities.
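One well-known way to probe this weakness is the fast gradient sign method (FGSM). It nudges every input feature by a small step `eps` in whichever direction increases the model's loss. The following is a minimal, dependency-free sketch of FGSM against a toy logistic-regression model. It is not the platform's attack suite. The weights, input, and `eps` value are hypothetical.

```python
import math
from typing import List


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def predict_proba(w: List[float], b: float, x: List[float]) -> float:
    """Probability of class 1 under a logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(g: float) -> int:
    return (g > 0) - (g < 0)


def fgsm(w: List[float], b: float, x: List[float], y: int, eps: float) -> List[float]:
    """Step each feature by eps in the direction that increases the loss."""
    p = predict_proba(w, b, x)
    # For binary cross-entropy loss, the gradient with respect to x is (p - y) * w.
    grad = [(p - y) * wi for wi in w]
    return [xi + eps * sign(gi) for xi, gi in zip(x, grad)]


# Hypothetical model and input with true label 1.
w, b = [2.0, -3.0, 1.0], 0.0
x, y, eps = [0.5, 0.2, 0.1], 1, 0.2
x_adv = fgsm(w, b, x, y, eps)
print(f"clean: {predict_proba(w, b, x):.2f}, adversarial: {predict_proba(w, b, x_adv):.2f}")
# prints "clean: 0.62, adversarial: 0.33": the predicted class flips from 1 to 0
```

Every feature moves by at most `eps`, yet the decision flips. For high-dimensional inputs such as images, a much smaller per-pixel `eps` can be enough, which is why such attacks can be hard to spot.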

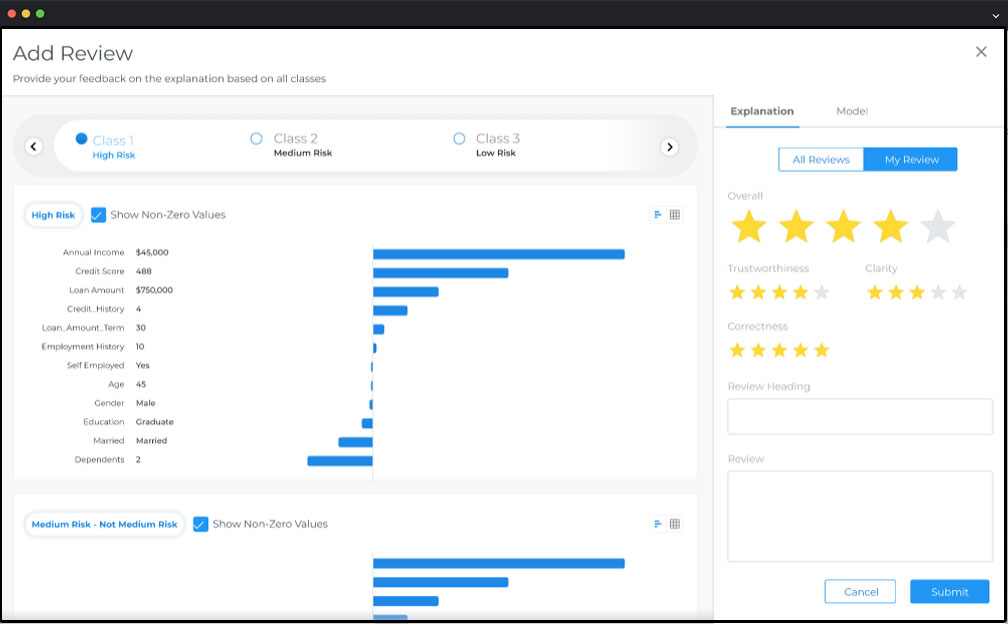

Benefit from Machine + Human Collaboration and Oversight for Validated and Trusted Results

Enable selective human intervention and oversight. Look beyond automation that reduces every decision to “0s” and “1s”. Account for a reality that is more like a spectrum, one that needs human expertise, intelligence and conscience to produce trusted, correct outcomes.
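A simple way to make human oversight selective is confidence-based routing. The system applies predictions the model is confident about automatically and sends borderline cases to a person. The following is a minimal sketch of that idea, not the platform's workflow. The `OversightRouter` class, the 0.8 threshold, and the case data are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class OversightRouter:
    """Auto-decide confident predictions; queue uncertain ones for a human reviewer."""
    threshold: float = 0.8
    auto_decisions: List[Tuple[str, int]] = field(default_factory=list)
    review_queue: List[Tuple[str, float]] = field(default_factory=list)

    def route(self, case_id: str, prob_positive: float) -> None:
        # Confidence is the probability of whichever class the model would pick.
        confidence = max(prob_positive, 1.0 - prob_positive)
        if confidence >= self.threshold:
            self.auto_decisions.append((case_id, int(prob_positive >= 0.5)))
        else:
            self.review_queue.append((case_id, prob_positive))


router = OversightRouter(threshold=0.8)
for case_id, prob in [("c1", 0.95), ("c2", 0.55), ("c3", 0.10), ("c4", 0.70)]:
    router.route(case_id, prob)
print("automated:", router.auto_decisions)
print("for human review:", router.review_queue)
```

The threshold controls the trade-off. Raising it sends more cases to people, and lowering it automates more. Reviewer decisions can also be kept as labeled examples for later validation.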

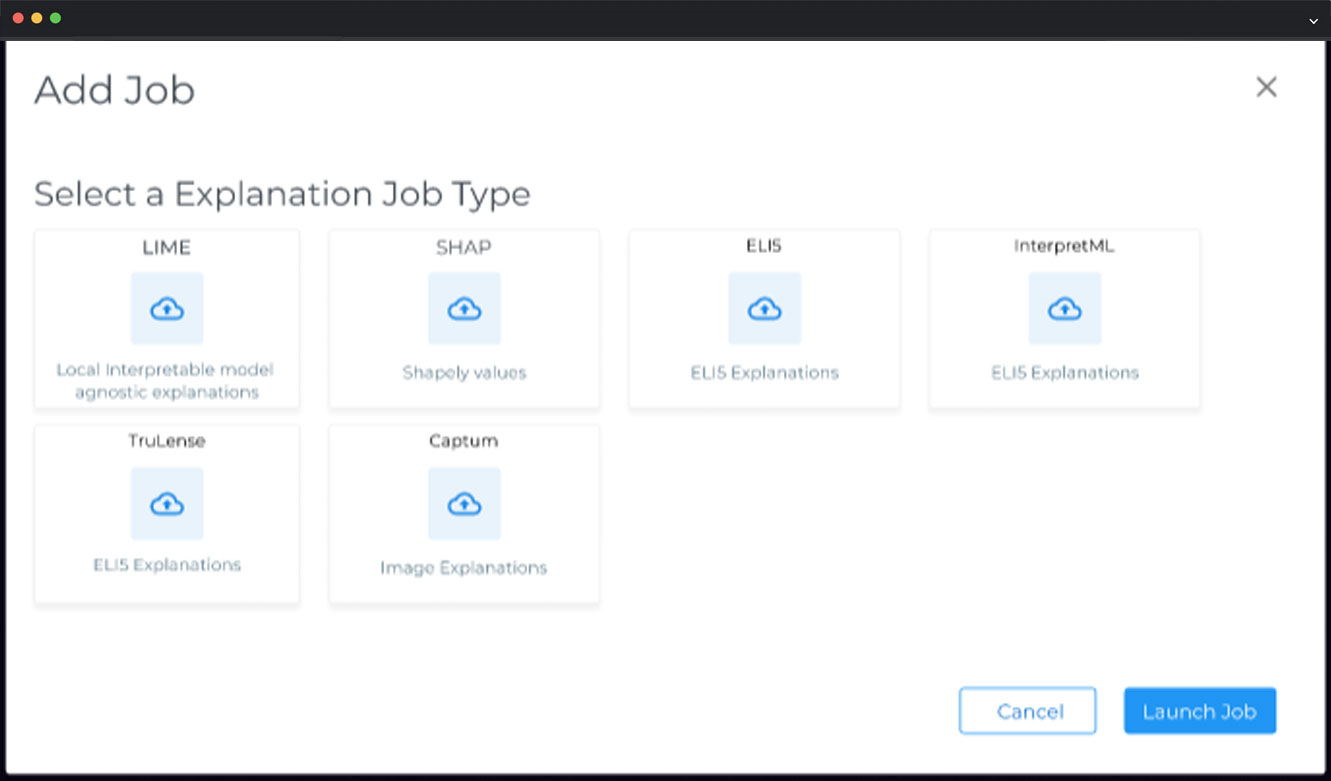

Auto-Scale the Platform with an Open AI Assurance Catalog, Including Bring Your Own Method (BYOM)

There is no “silver bullet” method for AI governance and risk management. Use a variety of trusted methods to validate AI outcomes across the AI and machine learning governance lifecycle.

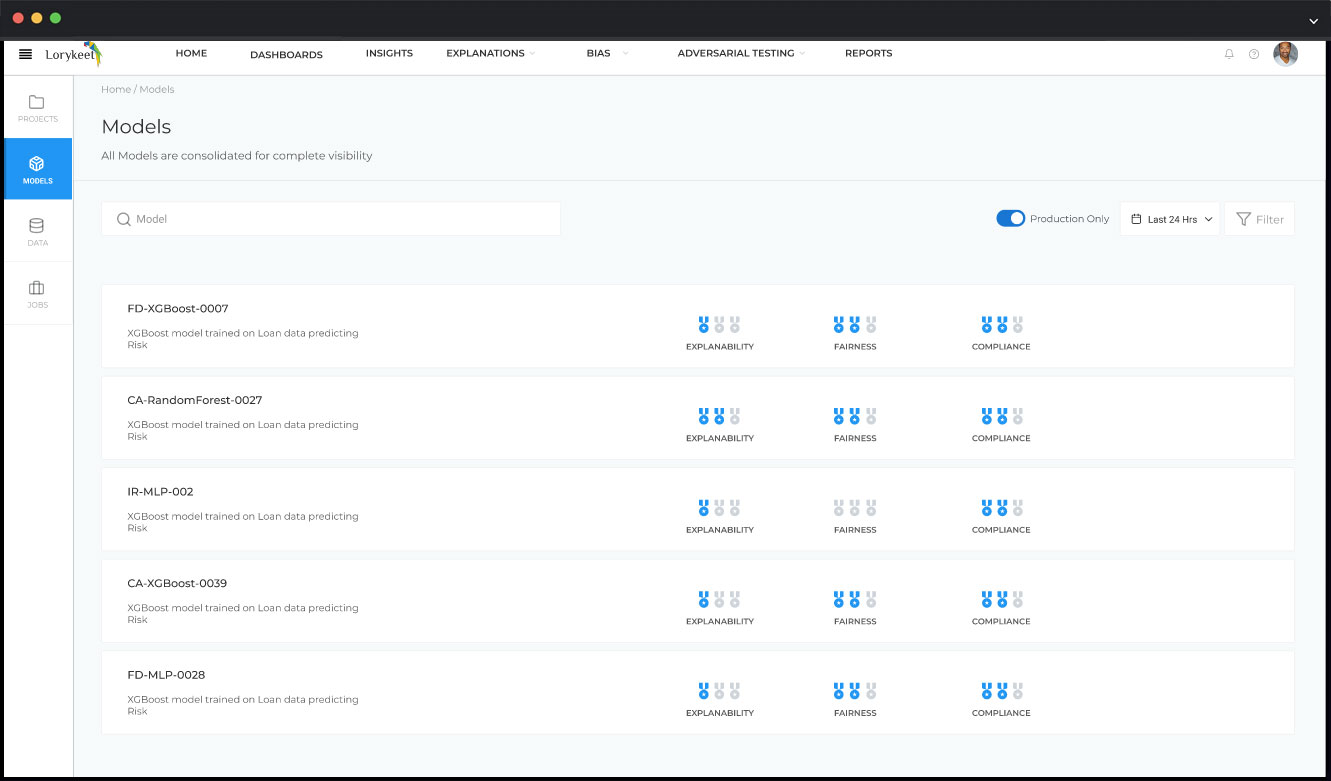

Monitor your Machine Learning Models for Model Assurance and Compliance

Stay compliant with local, national and global AI rules and regulations, and reduce the risk of regulatory fines and lawsuits. Manage your machine learning models proactively for compliance by performing internal model risk validations and audits.